Sound is everywhere around us: when we speak, when we listen to music, when we hear traffic noise, and even when everything seems to be silent. But what is sound, actually? We need to understand this phenomenon before we can store it on a machine and process it.

Sound is a mechanical wave that travels in an elastic medium. "Mechanical wave" here means that material particles are involved and that the wave results from the particles’ interactions. An elastic medium, in turn, is anything that contains particles able to interact with one another. The simplest example is, of course, air, but an elastic medium may be any other gas, a liquid (like water), or even a solid such as concrete. You probably know that sound cannot propagate in outer space; that’s because there are practically no particles there to interact.

Every sound has a vibrating element as its source. The simplest ones you can think of are your own vocal cords. When you speak, you tighten them, and the air flowing between them makes them vibrate in a rhythmic manner. This initial vibration in turn causes the air to vibrate: the vibrating element hits the air particles at a particular spot at more or less equal intervals, these particles hit their neighbors, which hit the next ones, and so on.

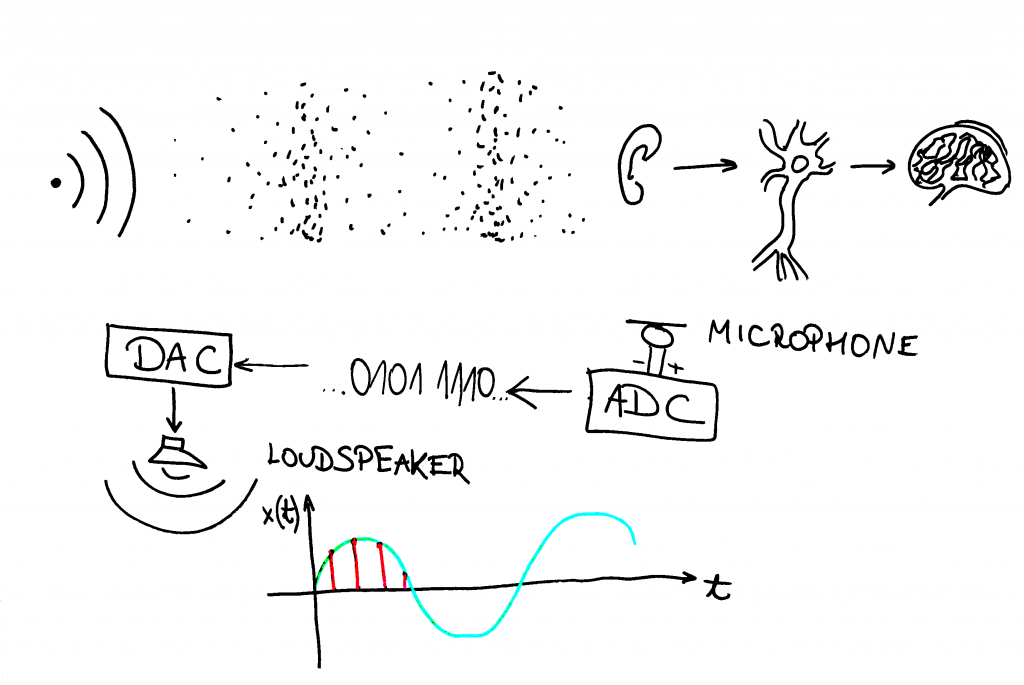

How do you hear, then? The propagating wave (groups of particles hitting others farther and farther from the source) reaches the tympanic membrane (the eardrum) in your ear, which causes it to vibrate as well. Through a series of interactions between various elements of the ear, the vibration is transformed into a neural signal that is carried to the brain, where it is interpreted. This is, of course, a simplified description, but it suffices for now.

The sound as a mechanical wave travelling through the air medium and reaching receivers: an ear and a microphone. Audio processing (by human body or a machine) follows.

The same mechanism is used to capture sound with a microphone. The diaphragm of the microphone vibrates under the incident particles, and this vibration is transformed into an electrical voltage at the microphone’s output. This output may then be recorded and stored.

Whatever the medium, sound preserves its wave characteristics. At the source, the vibrations are changes in the position of the vibrating object. In the air, the vibrations are carried by alternately denser and sparser groups of particles. At the microphone’s output, the vibration is a rising and falling voltage.

Whatever form these vibrations take, we can always call the result a sound wave and plot it against time. The abscissa (horizontal axis) of such a plot is usually time in seconds or milliseconds. The ordinate (vertical axis) may be pressure (in pascals), voltage (in volts), or displacement from equilibrium (in meters). On a computer, however, we can assign it an arbitrary unit, because it merely represents the notion of amplitude.
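As a minimal sketch of why the unit can be arbitrary, the Python snippet below takes one and the same vibration, expressed once as pressure and once as voltage, and rescales both to a peak of 1. The function name `normalize`, the 100 Hz tone, and the peak values of 0.02 Pa and 5 mV are assumptions chosen purely for illustration.

```python
import math

def normalize(samples):
    """Rescale a waveform so that its largest absolute value is 1.0."""
    peak = max(abs(s) for s in samples)
    if peak == 0:
        return list(samples)  # silence stays silence
    return [s / peak for s in samples]

# Hypothetical readings of the same 100 Hz vibration, taken 1 ms apart.
times_ms = range(10)
shape = [math.sin(2 * math.pi * 100 * t / 1000) for t in times_ms]

pressure_pa = [0.02 * v for v in shape]   # assumed peak of 0.02 Pa
voltage_v = [0.005 * v for v in shape]    # assumed peak of 5 mV

# After normalization both traces describe the same shape in arbitrary units.
norm_pressure = normalize(pressure_pa)
norm_voltage = normalize(voltage_v)
```

Once rescaled this way, the two lists agree up to floating-point rounding, which is the sense in which the vertical axis only carries the notion of amplitude.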

To store the continuous (analog) representation of sound on a computer, we need to transform the signal into the digital domain. To do this, we use sampling, i.e., recording the value of the analog signal at regular time intervals. The sampled sound may then be processed and played back through loudspeakers. On playback, the digital signal is converted back into an analog one in the form of a voltage, which makes the membrane of the speaker move in a rhythmic manner. The membrane in turn moves the air particles, which lets us hear the sound again, thus completing the cycle of sound, record, store, process, output, sound.
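The idea of recording values at regular time intervals can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the helper `sample`, the stand-in signal `tone` (a 440 Hz pure tone in arbitrary units), and the 8000 Hz sample rate are not taken from any particular library or device.

```python
import math

def sample(signal, duration_s, sample_rate_hz):
    """Read signal(t) at regular intervals of 1 / sample_rate_hz seconds."""
    n_samples = int(round(duration_s * sample_rate_hz))
    return [signal(n / sample_rate_hz) for n in range(n_samples)]

def tone(t):
    """Stand-in for the analog signal: a 440 Hz pure tone, arbitrary units."""
    return math.sin(2 * math.pi * 440 * t)

# 10 ms of sound read 8000 times per second gives 80 values.
samples = sample(tone, duration_s=0.01, sample_rate_hz=8000)
print(len(samples))  # 80
```

Here a Python function plays the role of the continuous signal; in a real recording chain the values would come from the microphone’s output voltage instead.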

The topic of sampling and digital representation will be discussed in next week’s article, so stick around!
